Yeah, I’ve been slacking lately on the project. School has been keeping me busy, work too.

My current problem is that something seems off with the data checksum routine, which is part of the PC-side code that processes the data. The SX code seems fine, and I’m getting very reliable data, both in length (consistently exactly 1088 bytes between headers) and in content.

My tests in October were showing that although I received the data perfectly correct (verified by looking on the Amiga in DiskMonTools at the actual real original data, byte for byte), the data checksum was failing.

The best I can figure out so far is that one of two things is happening: either 1) the data isn’t being stored properly in the array, i.e. it’s shifted by a byte somehow, or 2) the checksum routine is reading the data FROM the array incorrectly. I know the data is RIGHT, so it’s just a matter of getting the darn checksum routine to realize it.
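One way to tell those two cases apart is to run the checksum over the same buffer at a few neighbouring byte offsets and see whether any of them matches. Below is a minimal Python sketch of that idea. It assumes the buffer holds raw MFM bytes and that the checksum follows the commonly documented Amiga scheme (XOR of all big-endian 32-bit words, then masked with 0x55555555). The names `mfm_checksum` and `find_offsets` are made up for illustration and are not taken from AFR.

```python
import struct

MFM_DATA_MASK = 0x55555555  # keeps the data bits, drops the MFM clock bits


def mfm_checksum(raw: bytes) -> int:
    """XOR all big-endian 32-bit words of raw, then mask off the clock bits."""
    if len(raw) % 4:
        raise ValueError("length must be a multiple of 4 bytes")
    acc = 0
    for (word,) in struct.iter_unpack(">I", raw):
        acc ^= word
    return acc & MFM_DATA_MASK


def find_offsets(buf: bytes, start: int, length: int, expected: int, slack: int = 4):
    """Return the byte shifts around start at which the checksum matches expected."""
    hits = []
    for shift in range(-slack, slack + 1):
        lo = start + shift
        if lo < 0 or lo + length > len(buf):
            continue  # this shifted window would fall outside the buffer
        if mfm_checksum(buf[lo:lo + length]) == expected:
            hits.append(shift)
    return hits
```

If only shift 0 matches, the array is fine and the routine itself is suspect. If a nonzero shift matches, the data is landing in the array at the wrong position.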

The problem may lie in the difference in variable sizes between an old-school compiler (like Borland or something) and .NET. We’ve seen this crop up earlier. I’m weakest on understanding exactly what those differences are, and what the easiest way to fix them is. It doesn’t help that the checksum routines are semi-cryptic, or at least written in the “let’s write this the most efficient and smallest way possible” style of old-school C programmers. Forget about readability, let’s make it fast and small.
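For reference, a 16-bit Borland-era compiler has a 16-bit `int` and a 32-bit `long`, while in C# `int` is 32 bits, `long` is 64 bits, `byte` is unsigned, `sbyte` is signed, and `char` is a 16-bit character rather than a byte. One classic way such differences break a checksum is sign extension: a byte of 0x80 or above held in a signed 8-bit type smears ones into the upper bits when it is widened. The Python sketch below only mimics that effect to show what it does to an XOR checksum. Whether this is the actual bug here is an assumption, and both function names are hypothetical.

```python
def xor_words_unsigned(data: bytes) -> int:
    """XOR big-endian 32-bit words built from unsigned bytes."""
    acc = 0
    for i in range(0, len(data), 4):
        acc ^= int.from_bytes(data[i:i + 4], "big")
    return acc & 0xFFFFFFFF


def xor_words_sign_extended(data: bytes) -> int:
    """Same loop, but each byte is treated as a signed 8-bit value first."""
    acc = 0
    for i in range(0, len(data), 4):
        word = 0
        for b in data[i:i + 4]:
            signed = b - 256 if b >= 0x80 else b  # what a signed char would hold
            # OR-ing in a negative value sets every higher bit, as in C
            word = ((word << 8) | signed) & 0xFFFFFFFF
        acc ^= word
    return acc


sample = b"\x12\x80\x34\x56"
print(hex(xor_words_unsigned(sample)))       # 0x12803456
print(hex(xor_words_sign_extended(sample)))  # 0xff803456
```

Data with every byte below 0x80 gives identical results in both versions, which would fit a checksum that fails only "in some cases".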

Read the next post (the previous post, that is) for details from the last session.

Hey, nice to see you again!

On checksum routines: maybe it is worth writing these from scratch. At least you’d be able to debug them knowing precisely what you are doing, why, and where.

It is similar to my own failure with readily available routines from some Amiga emulation code; I eventually ended up rewriting those entirely, mainly because they weren’t as fast as I needed, and also because the resulting MFM flow actually wasn’t MFM at all.

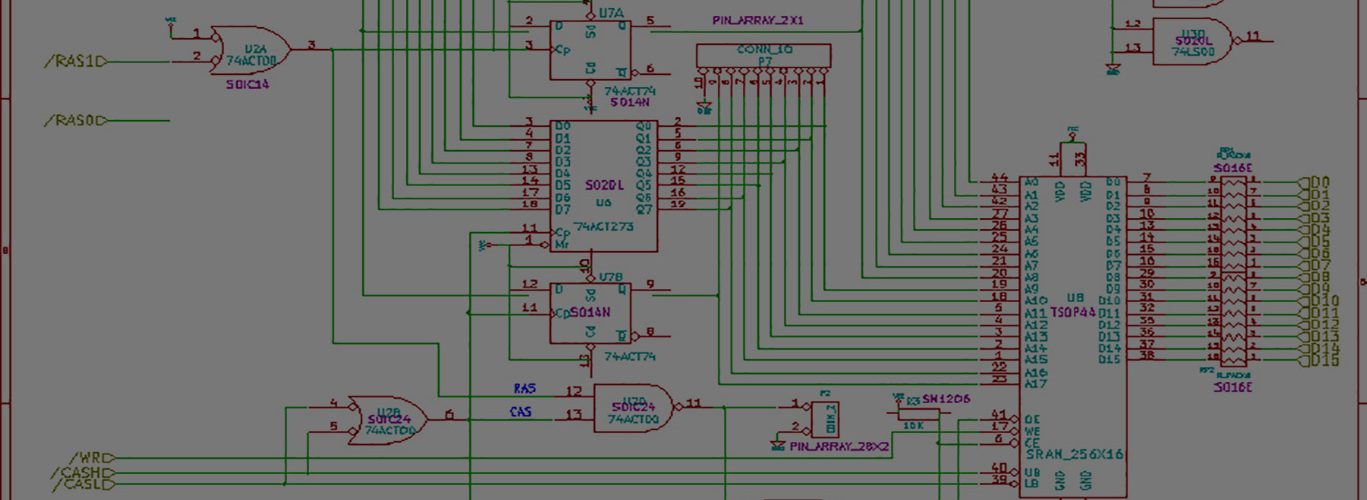

I haven’t worked on my project for quite a while, either. I got my hands on it only this Monday, and it shows some reassuring signs of progress. The code loads an ADF from flash, although it cheats a lot (no FAT long filenames, subdirectories, robust cluster walking, etc.), then converts it into a thick MFM image using a good 1.9M of the 4M DRAM available, and even delivers tracks 0, 2 and 4 (only three, and only even ones, for debugging purposes) to the Amiga correctly with respect to STEP pulses. I still have to bring in the SIDE signal and do all tracks, also tonight.

Hey, nice to see you as well!

I’ve considered rewriting these routines, but I’m not so sure I can find a definitive source for the right algorithm. I can go off of sample code, but in that case, if I’m going to trust them, I may as well trust their code.

I remember your MFM generation problems... that was from floppy.c, right?

Sounds like your project is progressing nicely. Good job.

Yeah, I’m struggling here with the checksum. I manually checked the data, byte for byte, and it’s perfect, but in some cases the routines I’m using from AFR are simply failing: they aren’t coming up with the stored checksum. Perhaps the stored checksum is being read incorrectly? I don’t know offhand if the checksum is checksummed itself in the header 🙂
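To rule out a misread stored checksum, it can be decoded separately and printed next to the computed one. Here is a minimal Python sketch, assuming the usual Amiga layout where a 32-bit value is stored as two MFM longwords, odd bits first and even bits second. The function names and the `offset` parameter are hypothetical, and the position of the checksum field within the sector is deliberately left to the caller.

```python
import struct

MFM_DATA_MASK = 0x55555555  # data bits of an MFM longword


def decode_mfm_long(odd: int, even: int) -> int:
    """Merge an odd-bits longword and an even-bits longword into one 32-bit value."""
    return (((odd & MFM_DATA_MASK) << 1) | (even & MFM_DATA_MASK)) & 0xFFFFFFFF


def read_stored_checksum(sector: bytes, offset: int) -> int:
    """Decode the 8 raw MFM bytes at offset (caller-supplied) into a 32-bit value."""
    odd, even = struct.unpack_from(">II", sector, offset)
    return decode_mfm_long(odd, even)
```

Comparing this value in hex with the computed checksum shows whether the two differ in a few bits, which would point at masking or sign problems, or completely, which would point at a wrong offset.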

The first 24 bytes of the payload (aka data) portion of each of my sectors appear to be the OFS header. Neat to see that. Each sector has 488 bytes of real data.
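That 24 + 488 split can be checked directly on a decoded 512-byte sector. The Python sketch below assumes the OFS data block layout as commonly documented for ADF images: six big-endian 32-bit fields (type, header key, sequence number, data size, next data block, checksum) followed by the data. The field names are illustrative. The block-level checksum here belongs to the filesystem and is separate from the MFM data checksum: in a valid block, all 128 longwords sum to zero modulo 2^32.

```python
import struct
from typing import NamedTuple

BLOCK_SIZE = 512
OFS_HEADER_SIZE = 24  # six big-endian 32-bit fields


class OfsDataHeader(NamedTuple):
    block_type: int  # 8 for a data block in the commonly documented layout
    header_key: int
    seq_num: int
    data_size: int
    next_data: int
    checksum: int


def parse_ofs_data_block(block: bytes):
    """Split a decoded 512-byte sector into its OFS header and its data bytes."""
    if len(block) != BLOCK_SIZE:
        raise ValueError("expected a 512-byte block")
    header = OfsDataHeader(*struct.unpack(">6I", block[:OFS_HEADER_SIZE]))
    payload = block[OFS_HEADER_SIZE:OFS_HEADER_SIZE + header.data_size]
    return header, payload


def block_sum_ok(block: bytes) -> bool:
    """True if all longwords of the block sum to zero modulo 2**32."""
    total = sum(word for (word,) in struct.iter_unpack(">I", block))
    return total & 0xFFFFFFFF == 0
```

If `block_sum_ok` passes on sectors whose MFM data checksum fails, that is further evidence the decoded data is good and the problem sits in the MFM checksum path.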

Yes, those were routines from floppy.c and around.

No, checksum fields aren’t checksummed themselves 🙂